
Facebook maximized engagement in its News Feed with news stories, then cornered the majority of ad dollars, squeezing media companies (some countries like Australia now demand Facebook pay media companies for this practice). Google’s Featured Snippets extracted the most valuable content out of some sites, making them practically obsolete. The recent popularity of AI tools raises questions about consent and ownership that are as old as the internet. And, frankly, it doesn't bring any direct benefit to me.” A “robots.txt” file tells search engine crawlers like Google which part of a site the crawler can access in order to prevent it from overloading the site with requests. It seems to be deliberately set up to ignore the directives website owners have in place. And most bots respect the robots.txt directive. “But, more importantly, Google's bot is respectful and doesn't hammer my site. “I directly benefit from search engines as they drive useful traffic to me,” Eden told Motherboard. “I had to pay to scale up my server, pay extra for export traffic, and spent part of my weekend blocking the abuse caused by this specific bot.”īeaumont also defended img2dataset by comparing it to the way Google indexes all websites online in order to power its search engine, which benefits anyone who wants to search the internet.

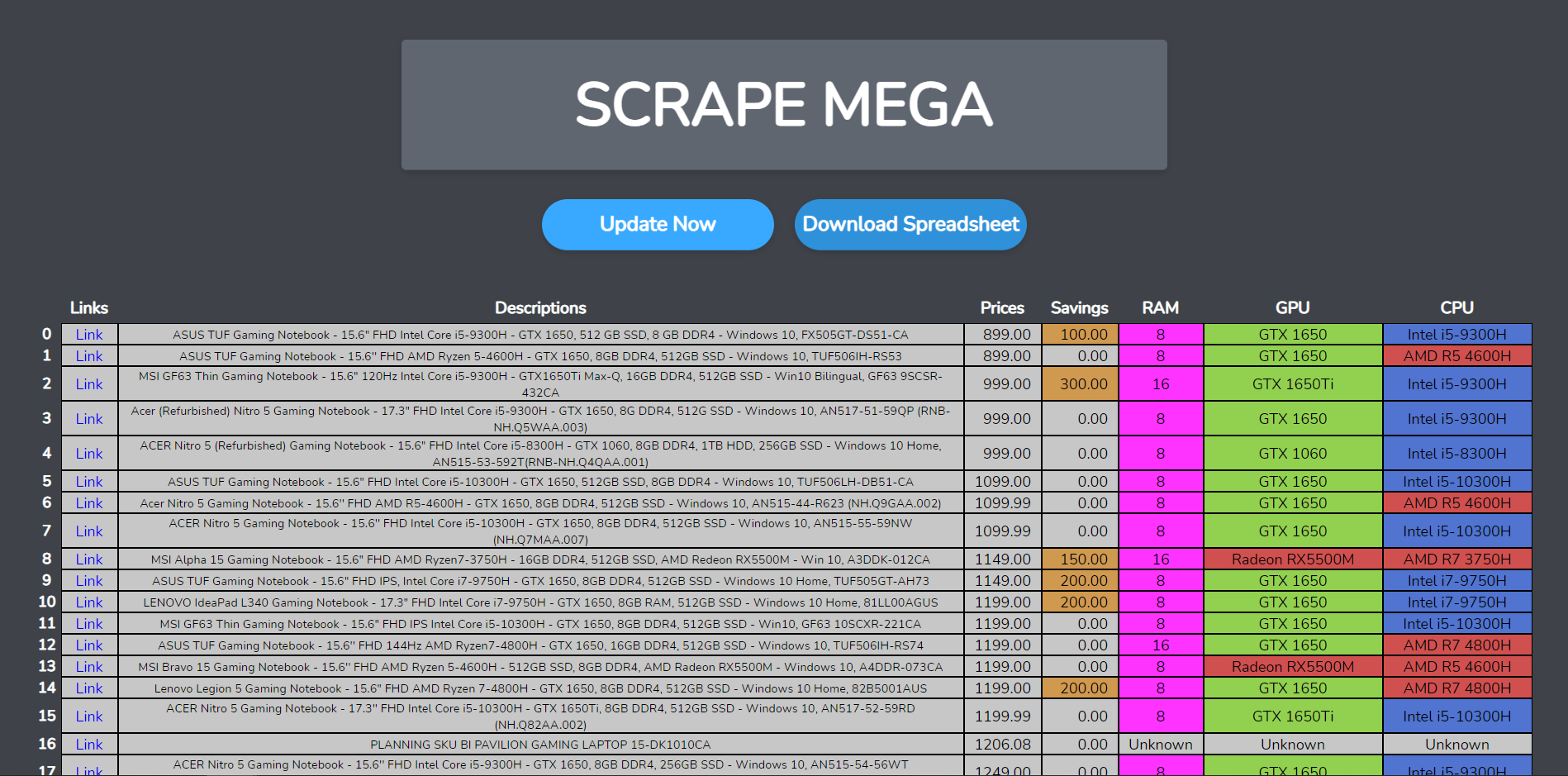

“I noticed because I received an alert from my host that the site was under a sustained attack,” Eden said. Currently, OpenBenches has mapped 27,629 benches, and hosts 250GB of photos. It seems you're trying to decide for million of other people without asking them for their consent.”Įden told Motherboard in an email that he noticed img2dataset was scraping his site, OpenBenches, which invites users to upload pictures and locations of memorial benches from across the world. You can give your consent for anything if you wish. “Letting a small minority prevent the large majority from sharing their images and from having the benefit of last gen AI tool would definitely be unethical yes,” he said on Github. When Eden and other Github commenters pushed back, Beaumont said it would be “unethical” to make img2dataset opt-in rather than opt-out. Beaumont did not respond to a request for comment. “If you don't wish for people to view images from your website, the best way is to turn it off,” Beaumont replied. “Please can you change the default behaviour so that it will only work on sites which set the X-Robots-Tag: YesAI?”

“I don't understand why the onus is on me to add a new header to my sites opting out of this tool,” Eden said. On Sunday, Terence Eden posted a comment on the Github page, saying that the tool “hammered” several of his sites and requesting that it be made opt-in. Img2dataset will attempt to scrape images from any site unless site owners add https headers like “X-Robots-Tag: noai,” and “X-Robots-Tag: noindex.” That means that the onus is on site owners, many of whom probably don’t even know img2dataset exists, to opt out of img2dataset rather than opt in. Beaumont is also an open source contributor to LAION-5B, one of the largest image datasets in the world that contains more than 5 billion images and is used by Imagen and Stable Diffusion.

The result is an image dataset, the kind that trains image-generating AI models like Open AI’s DALL-E, the open source Stable Diffusion model, and Google’s Imagen. Img2dataset is a free tool Beaumont shared on GitHub which allows users to automatically download, and resize a list of URLs.
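For readers who want to see what honoring these signals involves, here is a minimal Python sketch of the two checks a well-behaved scraper could run before downloading an image: looking for an opt-out directive in an “X-Robots-Tag” header, and consulting a site's robots.txt rules. It is not img2dataset's actual code. The function names, the “examplebot” user agent and the example.org URLs are hypothetical, and the header parsing is deliberately simplified (it ignores directives scoped to a specific bot, such as “googlebot: noindex”).

```python
from typing import Mapping
from urllib.robotparser import RobotFileParser

# Directives the article names as opt-out signals (assumed here to be
# compared case-insensitively).
OPT_OUT_DIRECTIVES = {"noai", "noindex"}


def header_opts_out(headers: Mapping[str, str]) -> bool:
    """Return True if an X-Robots-Tag header carries an opt-out directive."""
    for name, value in headers.items():
        if name.lower() != "x-robots-tag":
            continue
        # A header value may list several comma-separated directives.
        directives = {part.strip().lower() for part in value.split(",")}
        if directives & OPT_OUT_DIRECTIVES:
            return True
    return False


def robots_txt_allows(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /photos/\n"
    print(header_opts_out({"X-Robots-Tag": "noai, noimageai"}))
    print(robots_txt_allows(rules, "examplebot", "https://example.org/photos/1.jpg"))
```

An opt-in design like the one Eden asked for would invert the first check: scrape only when a header such as “X-Robots-Tag: YesAI” is present, and skip the site otherwise.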
